|

| XOR gate sold by Amazon [1] |

In [2] a question was asked:

How can we solve \[x^TAy = b\] for \(x_i, y_i \in \{-1,+1\}\)?

In this post I will discuss three different formulations:

- A simple quadratic formulation yielding a non-convex MIQCP model

- A MIP model based on a standard linearization

- A MIP model based on a linearization using an xor construct

Test Data

To help with some test models we need some data for \(A\) and \(b\). Here is an instance that actually has a solution (printed with 3 decimals):

---- 13 PARAMETER a

i1 i2 i3 i4 i5

i1 0.998 0.579 0.991 0.762 0.131

i2 0.640 0.160 0.250 0.669 0.435

i3 0.360 0.351 0.131 0.150 0.589

i4 0.831 0.231 0.666 0.776 0.304

i5 0.110 0.502 0.160 0.872 0.265

---- 13 PARAMETER b = 2.222

Notice that solutions are not unique: if we have a solution \((x,y)\) then another solution is \((-x,-y)\).

MIQCP Model

The simplest is to use a Mixed-Integer Quadratically Constrained (MIQCP) model that handles the quadratic equation directly. Of course, in optimization we prefer binary variables \(z \in\{0,1\}\) instead of \(z \in\{-1,+1\}\). That is easily fixed: introduce binary variables \(p_i, q_i\) and write: \[\begin{align}&x_i = 2p_i-1\\&y_i = 2q_i-1\\&p_i,q_i \in\{0,1\}\end{align}\] This maps a binary variable to \(\{-1,+1\}\). So a MIQCP model can look like:

| MIQCP Formulation |

|---|

| \[\begin{align}

& \sum_{i,j} \color{DarkRed} x_i \color{DarkRed}y_j \color{DarkBlue}a_{i,j} = \color{DarkBlue}b \\

& \color{DarkRed}x_{i} = 2\color{DarkRed}p_{i}-1\\

& \color{DarkRed}y_{i} = 2\color{DarkRed}q_{i}-1\\

& \color{DarkRed}p_i, \color{DarkRed}q_i \in\{0,1\} \end{align}\] |

This is a non-convex problem, so you need a global solver like Baron, Couenne or Antigone. Most quadratic solvers only support the much easier, convex case. When expressing this feasibility problem as an optimization problem, we need to add a dummy objective. The solution can look like:

---- 55 VARIABLE p.L

i1 1.000, i4 1.000, i5 1.000

---- 55 VARIABLE q.L

i1 1.000, i2 1.000, i4 1.000

---- 55 VARIABLE x.L

i1 1.000, i2 -1.000, i3 -1.000, i4 1.000, i5 1.000

---- 55 VARIABLE y.L

i1 1.000, i2 1.000, i3 -1.000, i4 1.000, i5 -1.000

---- 59 PARAMETER b2 = 2.222 x'Ay using solution values

We find a solution that when plugged into \(x^TAy\), reproduces our right-hand side.

Linearization 1

This problem can be linearized. This will allow us to use a linear MIP solver. One way is to write:\[\sum_{i,j} x_i y_j a_{i,j} = \sum_{i,j} (2p_i-1)(2q_j-1) a_{i,j} = \sum_{i,j} \left( 4 p_i q_j - 2 p_i -2q_j + 1 \right) a_{i,j} \]

The binary product \(v_{i,j} = p_i q_j\) can be linearized using a standard formulation [3]: \[\begin{align} &v_{i,j} \le p_i\\ &v_{i,j} \le q_j \\ & v_{i,j} \ge p_i + q_j -1 \\ & p_i, q_j, v_{i,j} \in \{0,1\} \end{align}\] Combining this leads to:

| MIP Formulation I |

|---|

| \[\begin{align}

& \sum_{i,j} \left( 4 \color{DarkRed} v_{i,j} - 2 \color{DarkRed}p_i -2 \color{DarkRed} q_j + 1 \right) \color{DarkBlue} a_{i,j} = \color{DarkBlue}b \\

& \color{DarkRed}v_{i,j} \le \color{DarkRed}p_i\\

& \color{DarkRed}v_{i,j} \le \color{DarkRed}q_j \\

& \color{DarkRed}v_{i,j} \ge \color{DarkRed}p_i + \color{DarkRed}q_j -1 \\

& \color{DarkRed}x_{i} = 2\color{DarkRed}p_{i}-1\\

& \color{DarkRed}y_{i} = 2\color{DarkRed}q_{i}-1\\

& \color{DarkRed}p_i, \color{DarkRed}q_i, \color{DarkRed}v_{i,j} \in\{0,1\} \end{align}\] |

It is noted that \(v\) can be relaxed to be continuous between 0 and 1: \(v_{i,j} \in [0,1]\). The solution can look like:

---- 62 VARIABLE p.L

i1 1.000, i4 1.000, i5 1.000

---- 62 VARIABLE q.L

i1 1.000, i2 1.000, i4 1.000

---- 62 VARIABLE v.L v(i,j)=p(i)*q(j)

i1 i2 i4

i1 1.000 1.000 1.000

i4 1.000 1.000 1.000

i5 1.000 1.000 1.000

---- 62 VARIABLE x.L

i1 1.000, i2 -1.000, i3 -1.000, i4 1.000, i5 1.000

---- 62 VARIABLE y.L

i1 1.000, i2 1.000, i3 -1.000, i4 1.000, i5 -1.000

---- 66 PARAMETER b2 = 2.222 x'Ay using solution values

Note that zeroes are not printed here (so the matrix \(v\) may look a bit strange).

Linearization 2

There is another linearization which is more interesting. For this we will use the binary

xor operator.

There are different ways

xor is denoted. I believe the most common ones are \[\begin{align} & z = x \textbf{ xor } y\\ & z = \textbf{xor}(x,y) \\ & z = x \oplus y \\ & z = x \veebar y\end{align}\] The

xor truth table is: \[\begin{matrix} \textbf{ x } & \textbf{ y } & \textbf{ z }\\ 0 & 0 & 0 \\ 0 & 1 & 1 \\ 1 & 0 & 1\\ 1 & 1 & 0

\end{matrix}\]I.e. \(z = x \textbf{ xor } y \) is 1 if and only if \(x\) and \(y\) are different.

Now consider the following thruth-table:

---- 15 PARAMETER t truth-table

x y x*y p q p xor q 1 - 2 xor

i1 -1 -1 1 1

i2 -1 1 -1 1 1 -1

i3 1 -1 -1 1 1 -1

i4 1 1 1 1 1 1

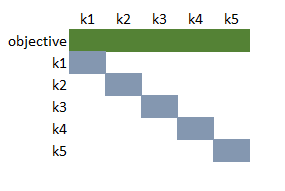

From this we can see that \[x_i y_j = 1 - 2 (p_i \textbf{ xor } q_j) \] So our quadratic constraint becomes \[\sum_{i,j} x_i y_j a_{i,j} = \sum_{i,j} \left( 1 - 2 (p_i \textbf{ xor } q_j) \right) a_{i,j} = b\] The operation \(w_{i,j} = p_i \textbf{ xor } q_j\) can be linearized [4]: \[\begin{align} & w_{i,j} \le p_i + q_j \\& w_{i,j} \ge p_i - q_j \\& w_{i,j} \ge q_j - p_i \\& w_{i,j} \le 2 - p_i - q_j \\ & p_i, q_j, w_{i,j} \in \{0,1\} \end{align}\] With these tools we can formulate a different linearization:

| MIP Formulation II |

|---|

| \[\begin{align}

& \sum_{i,j} \left( 1 - 2 \color{DarkRed} w_{i,j} \right) \color{DarkBlue} a_{i,j} = \color{DarkBlue}b \\

& \color{DarkRed}w_{i,j} \le \color{DarkRed}p_i + \color{DarkRed}q_j\\

& \color{DarkRed}w_{i,j} \ge \color{DarkRed}p_i - \color{DarkRed}q_j \\

& \color{DarkRed}w_{i,j} \ge \color{DarkRed}q_j - \color{DarkRed}p_i \\

& \color{DarkRed}w_{i,j} \le 2 - \color{DarkRed}p_i - \color{DarkRed}q_j \\

& \color{DarkRed}x_{i} = 2\color{DarkRed}p_{i}-1\\

& \color{DarkRed}y_{i} = 2\color{DarkRed}q_{i}-1\\

& \color{DarkRed}p_i, \color{DarkRed}q_i, \color{DarkRed}w_{i,j} \in\{0,1\} \end{align}\] |

It is noted that \(w\) can be relaxed to be continuous between 0 and 1: \(w_{i,j} \in [0,1]\). A solution can look like:

---- 62 VARIABLE p.L

i1 1.000, i4 1.000, i5 1.000

---- 62 VARIABLE q.L

i1 1.000, i2 1.000, i4 1.000

---- 62 VARIABLE w.L w(i,j) = p(i) xor q(j)

i1 i2 i3 i4 i5

i1 1.000 1.000

i2 1.000 1.000 1.000

i3 1.000 1.000 1.000

i4 1.000 1.000

i5 1.000 1.000

---- 62 VARIABLE x.L

i1 1.000, i2 -1.000, i3 -1.000, i4 1.000, i5 1.000

---- 62 VARIABLE y.L

i1 1.000, i2 1.000, i3 -1.000, i4 1.000, i5 -1.000

---- 66 PARAMETER b2 = 2.222 x'Ay using solution values

Conclusion

I am somewhat surprised how different the two linearizations look. You would not easily recognize that the two different linear formulations are essentially the same. I don't remember many times I could use an

xor operation in an optimization (I used it to solve Takuzu puzzles in [5]). The second linearization earns extra points for using

xor!

This question was more interesting than I anticipated.

References

- A Fairchild/ON Semiconductor XOR gate sold by Amazon for $1.69. https://www.amazon.com/Logic-Gates-2-Input-XOR-Gate/dp/B00MEKUZV8

- Matrix Equation & Integer Programming, https://math.stackexchange.com/questions/3047086/matrix-equation-integer-programming/3047835

- Multiplication of Binary Variables, https://yetanothermathprogrammingconsultant.blogspot.com/2008/05/multiplication-of-binary-variables.html

- XOR as linear inequalities, https://yetanothermathprogrammingconsultant.blogspot.com/2016/02/xor-as-linear-inequalities.html

- Solving Takuzu puzzles as a MIP using xor, https://yetanothermathprogrammingconsultant.blogspot.com/2017/01/solving-takuzu-puzzles-as-mip-using-xor.html