Python-based modeling tools can be relatively slow on real-world, large-scale models. I suspect the reason is that, behind the high-level syntax, they take an essentially “scalar” approach: every individual constraint and variable results in objects being created.

From: https://software.sandia.gov/trac/coopr/raw-attachment/wiki/Pyomo/pyomo-jnl.pdf :

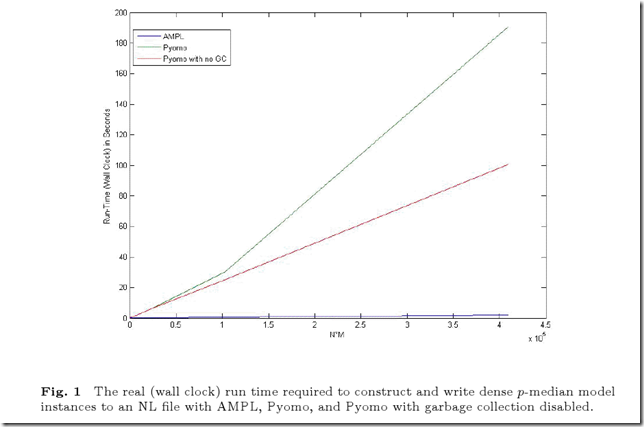

- up to 62 times slower (32 times when garbage collection is disabled) than AMPL on a large, dense p-median problem

- 6 times slower than GAMS on a complex production/transportation model

I don’t think this performance hit is mainly a result of Python vs. C/C++, but rather a result of the abstraction level of the modeling framework. The framework would need to be lifted to work on blocks of variables, equations and parameters instead of individual variables and equations. I suspect that a “proper” implementation of such a modeling framework (which is more complex than what is done here) would be able to achieve AMPL- and GAMS-like performance.
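To make the “scalar” vs. “block” distinction concrete, here is a minimal sketch in plain Python. It is not how Pyomo, AMPL or GAMS are actually implemented; the names `ScalarVar`, `VarBlock`, `build_scalar` and `offset` are hypothetical and exist only for this illustration. The scalar style allocates one Python object per variable, while the block style keeps a single object that stores the bounds of the whole indexed set in flat arrays.

```python
from array import array


class ScalarVar:
    """Hypothetical 'scalar' style: one Python object per decision variable."""
    __slots__ = ("name", "lb", "ub")

    def __init__(self, name, lb=0.0, ub=1.0):
        self.name = name
        self.lb = lb
        self.ub = ub


def build_scalar(n, m):
    """Create n*m individual variable objects, keyed by their index tuple."""
    return {(i, j): ScalarVar(f"x[{i},{j}]")
            for i in range(n) for j in range(m)}


class VarBlock:
    """Hypothetical 'block' style: one object for the whole n-by-m variable set."""

    def __init__(self, name, n, m, lb=0.0, ub=1.0):
        self.name = name
        self.shape = (n, m)
        # Bounds live in two flat arrays of doubles, not in n*m objects.
        self.lb = array("d", [lb]) * (n * m)
        self.ub = array("d", [ub]) * (n * m)

    def offset(self, i, j):
        """Row-major position of x[i, j] inside the flat arrays."""
        return i * self.shape[1] + j


scalar_vars = build_scalar(50, 40)   # 2000 ScalarVar objects plus 2000 key tuples
block = VarBlock("x", 50, 40)        # 1 VarBlock object plus 2 flat arrays
```

Both structures describe the same 2000 variables; the difference is how many Python objects have to be created, tracked and later walked when the model is written out for the solver.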

This discussion might interest you:

http://www.or-exchange.com/questions/2649/suitable-software-for-optimization-problem?page=1#2728

Even if you lift the abstraction level to "blocks" of variables, constraints, etc. (as AMPL does), at some point you'll need to lower the representation to the solver level.

A quick update on Figure 1 that might interest folks. First, we have reduced the run-time for the largest N*M value to 67 seconds, down from ~100 seconds. Second, we have also eliminated the difference in times between the cases when garbage collection is and is not disabled.

A good chunk of the Pyomo versus AMPL differences that remain are actually due to file I/O - specifically, 70% of the run-time is dedicated to just writing the NL file. Python I/O is known to be rather slow, and we are definitely seeing this in our experiments.

In terms of pure model instantiation, we have no indication that the remaining (small) performance difference between AMPL and Pyomo is due to the abstraction level of the modeling framework. Rather, there remain a few hot-spots that have much more to do with Python vs. C++ than anything else.

The difference in gc versus no-gc times was due to us not explicitly breaking reference cycles in the modeling components. Now that we do this, the garbage collector has very little work to do.
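For readers unfamiliar with the reference-cycle issue, here is a small sketch of the general effect in CPython. It is not Pyomo's actual code, and it uses a weak back-reference as one possible way to avoid a parent/child cycle, which may differ from how the cycles were broken in Pyomo. The class names `Node`, `StrongNode`, `WeakNode` and the helper `freed_without_gc` are made up for this example.

```python
import gc
import weakref


class Node:
    """Hypothetical modeling component with children and a parent link."""

    def __init__(self, name):
        self.name = name
        self.children = []
        self._parent = None


class StrongNode(Node):
    def add(self, child):
        # Strong back-reference: parent and child now form a reference cycle.
        child._parent = self
        self.children.append(child)


class WeakNode(Node):
    def add(self, child):
        # Weak back-reference: the child does not keep its parent alive.
        child._parent = weakref.ref(self)
        self.children.append(child)


def freed_without_gc(node_cls):
    """Return True if a small model is reclaimed by reference counting alone."""
    was_enabled = gc.isenabled()
    gc.disable()                      # rule out the cyclic collector
    try:
        model = node_cls("model")
        model.add(node_cls("constraint"))
        probe = weakref.ref(model)    # lets us observe whether model still exists
        del model
        return probe() is None
    finally:
        if was_enabled:
            gc.enable()


print(freed_without_gc(StrongNode))
print(freed_without_gc(WeakNode))
```

In CPython, the cyclic version stays in memory until the cyclic collector runs, while the cycle-free version is released as soon as the last reference is dropped. With no cycles to find, the collector has little to do, which is consistent with the gc and no-gc timings converging.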

As another data point: in the relatively large example from a collaborator at Naval Postgraduate School (referenced above), we have a direct GAMS vs. Pyomo end-to-end test (instantiate, solve, load solution, etc). The performance difference there is 3x.

Finally, I'd like to point out that once a Python-based package is within 5-10x of a C/C++-based, commercial package, there really isn't much motivation to close the gap. There are very different motivations for using the different packages. This isn't to say, having stared at performance profiles for many a month, that it is impossible. Rather, I don't think it's worth the effort, and most certainly not worth the decrease in maintainability of the software that would result.